The Enterprise Moat Is Moving from Where Data Sleeps to Where AI Agents Work

I’ve spent three decades inside enterprise systems of record: building them, integrating them, and watching implementations go sideways because the schema didn’t account for how German bonus components actually work, or because someone assumed “termination date” meant the same thing in California and France. The one thing that never changes is that the closer you get to how enterprise data actually moves, the less it resembles the clean diagrams in the architecture docs.

So as I watched enterprise software market capitalization evaporate this February, I felt vertigo, because the thesis the market is pricing in is one I’ve been grappling with for years: the system of record, the thing I spent my career building and integrating, is losing its grip on the enterprise.

By mid-February, the losses had reached an estimated $1 trillion, with Salesforce and Workday each down more than 40% from their highs.

Jason Lemkin, often called the godfather of SaaS, offered a diagnosis: the AI crash narrative simply gave the market permission to reprice what the fundamentals had been signaling for three years. The correction would have happened eventually, but AI was the catalyst and the story.

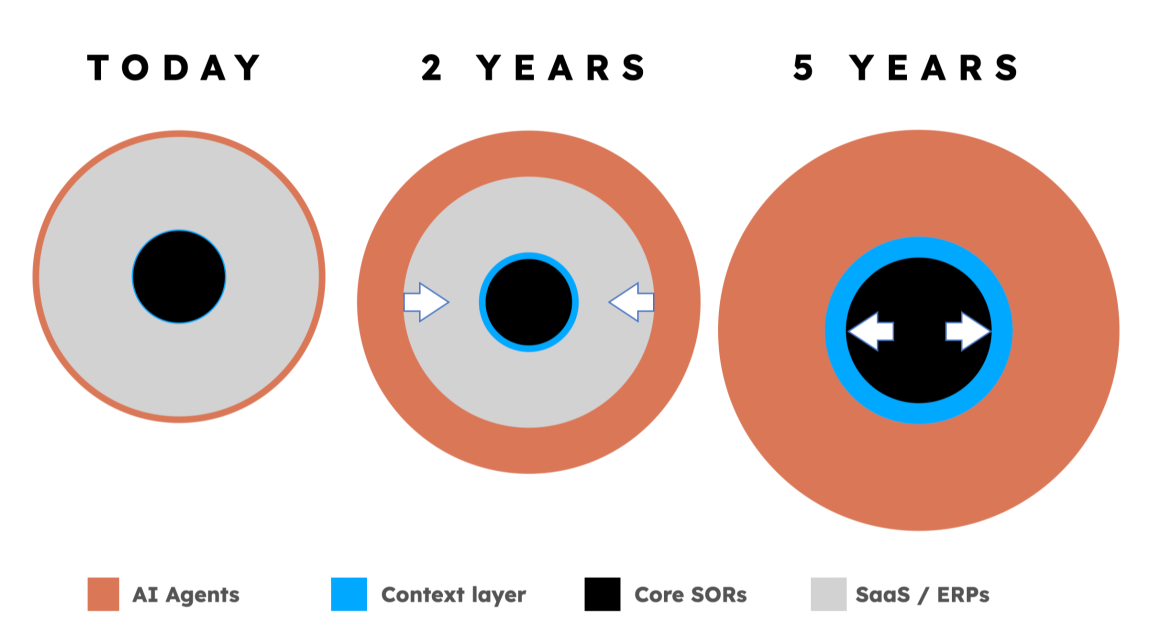

Underneath the valuation correction, something structural is shifting. The system of record, the architectural foundation of every major enterprise software company built in the last thirty years, is beginning to lose its strategic value. Not because the data inside is less important, but because the logic that made SORs defensible is starting to migrate somewhere else.

How SORs Became the Center of Enterprise Software

To understand what’s breaking, you have to understand what SORs actually are, and what they’re not. I say this as someone who has spent his whole career inside some of the largest of them.

A system of record is not just a database. It never was. The reason SAP, Salesforce, Workday, and Oracle became enterprise software giants is not that they stored rows of data particularly well. The value was in the orchestration logic: the entity relationships, the workflow rules, the cross-object validations, the compliance constraints. The SOR defined how data related to other data, how events triggered other events, and what was allowed to happen under which conditions.

When you bought Workday, you weren’t buying a place to store employee records. You were buying a deeply encoded model of how HR operations work — how a promotion in Germany triggers a different compensation workflow than a promotion in California, how a termination flows through benefits, payroll, access management, and compliance simultaneously. I know this because I’ve mapped those flows field by field, across dozens of countries, and the complexity is staggering.
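To make “orchestration logic” concrete, here is a minimal sketch of the kind of rule an SOR encodes: one business event fans out into different downstream steps depending on jurisdiction. The rule table, step names, and `route_event` function are hypothetical placeholders, not Workday’s actual model or any country’s statutory requirements.

```python
from dataclasses import dataclass

# Hypothetical rule table: (event type, jurisdiction) -> ordered downstream steps.
# Step names are illustrative placeholders, not a vendor model or legal requirements.
WORKFLOW_RULES: dict[tuple[str, str], list[str]] = {
    ("promotion", "DE"): [
        "works_council_review",
        "recalculate_bonus_components",
        "update_payroll",
    ],
    ("promotion", "US-CA"): ["manager_approval", "update_payroll"],
    ("termination", "US-CA"): [
        "final_pay",
        "benefits_offboarding",
        "revoke_access",
        "compliance_record",
    ],
}


@dataclass(frozen=True)
class HrEvent:
    event_type: str
    jurisdiction: str
    employee_id: str


def route_event(event: HrEvent) -> list[str]:
    """Return the downstream steps an event triggers in its jurisdiction."""
    key = (event.event_type, event.jurisdiction)
    if key not in WORKFLOW_RULES:
        # Fail loudly: an unmodelled combination is where implementations go sideways.
        raise KeyError(f"no workflow rule for {key}")
    return WORKFLOW_RULES[key]


# The same event type yields a different workflow in each jurisdiction.
print(route_event(HrEvent("promotion", "DE", "E-001")))
print(route_event(HrEvent("promotion", "US-CA", "E-002")))
```

Multiply a table like this by every event type, every country, and every cross-object validation, and you get a sense of why that logic took decades to encode.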

None of this fits neatly into a universal schema, and every SOR that tries to force it into one creates a new layer of translation problems upstream and downstream.

That orchestration logic — the entity relationships and the business rules that govern them — was the moat. It took decades to encode. It created massive switching costs. And it worked perfectly in a world where humans were the consumers of that logic, navigating it through purpose-built UIs.

That world is ending. Slowly, unevenly, and with far more friction than the AI hype cycle suggests — but it’s ending.

Agents Don’t Navigate UIs. They Need Data.

AI agents can click buttons and navigate menus; there’s an entire generation of RPA and browser automation that proves it. But that’s a band-aid, not an architecture. An agent screen-scraping its way through a Workday UI is doing the digital equivalent of a human reading a spreadsheet aloud to another human so they can type the numbers into a different spreadsheet. (Thomas Otter has made this point repeatedly.)

In theory, agents need three things: data, context, and the ability to act. When an AI agent processes a workflow that spans Workday, SAP, a local payroll provider, and a benefits platform, it doesn’t care which system is the “system of record.” It needs to understand what the data means, how it relates across systems, and what rules govern its transformation — regardless of where that data physically lives.

In practice, most large enterprises are still struggling with basic API integration. Procurement cycles, compliance reviews, security assessments, and change management processes don’t move at “agent speed.” The enterprise IT organization that took eighteen months to approve a static integration isn’t going to hand autonomous data access to an AI agent next quarter.

But the direction is clear, even if the timeline is measured in years rather than months. And the architectural implication doesn’t require full agent autonomy to matter. The moment any cross-system orchestration — whether agent-driven or human-managed — needs to operate across SOR boundaries, the same structural problem emerges.

This is the inversion. SORs (and by extension SaaS) were valuable because they concentrated orchestration logic in one place, and humans accessed that logic through the application layer. Cross-system agents — or even just cross-system workflows mediated by AI — bypass the application layer. They need the orchestration logic, but they need it extracted from the SOR, normalized across systems, and made consumable through APIs and tool definitions.
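A minimal sketch of what “extracted and made consumable through tool definitions” could look like, assuming an MCP-style tool descriptor (a `name`, a `description`, and an `inputSchema` written as JSON Schema). The tool name, the `NORMALIZED` lookup table, and the `call_tool` handler are hypothetical; a real context layer would sit on live cross-system mappings rather than a hard-coded dictionary.

```python
# Hypothetical tool descriptor, shaped like an MCP tool definition
# (name, description, and an inputSchema expressed as JSON Schema).
TOOL = {
    "name": "get_effective_termination_date",
    "description": (
        "Return an employee's effective termination date, normalized across "
        "source systems, with the source record it was derived from."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "employee_id": {"type": "string"},
            "jurisdiction": {"type": "string"},
        },
        "required": ["employee_id", "jurisdiction"],
    },
}

# Hypothetical normalized view: (employee_id, jurisdiction) -> value plus provenance.
NORMALIZED = {
    ("E-001", "FR"): {
        "date": "2026-03-31",
        "source_system": "payroll_fr",
        "source_field": "date_fin_contrat",
    },
}


def call_tool(arguments: dict) -> dict:
    """Minimal handler: check required arguments, then answer from the normalized view."""
    missing = [name for name in TOOL["inputSchema"]["required"] if name not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    record = NORMALIZED.get((arguments["employee_id"], arguments["jurisdiction"]))
    if record is None:
        return {"found": False}
    # The answer travels with its provenance so the call can be audited later.
    return {"found": True, **record}


print(call_tool({"employee_id": "E-001", "jurisdiction": "FR"}))
```

The design point is that the agent never touches the source system’s schema directly: it calls a tool whose answer already carries the normalized meaning and the source system and field it came from.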

When cross-system orchestration becomes the primary mode of enterprise work, the SOR’s orchestration logic stops being a moat and starts being a wall. Every proprietary entity relationship model, every vendor-specific schema, every closed API becomes friction that agents and integration layers have to work around rather than through.

The SOR doesn’t disappear, but it gets demoted. It becomes what it always was underneath the orchestration logic: a headless database. A persistence layer. The “truth” no longer sits in one database; it’s spread across the full context of agent-managed transactions.

For those who built their careers on the premise that if you encoded enough domain knowledge into a system of record, you had created something defensible, this is scary as hell.

The $1 Trillion Question: Where Does the Orchestration Logic Go?

If SORs are becoming headless databases, the question is: where does the orchestration logic migrate to?

The enterprise AI discourse in 2025–2026 has actually produced a consistent answer from multiple independent voices.

In December 2025, Foundation Capital’s Ashu Garg and Jaya Gupta published what became one of the most-discussed theses in enterprise AI: the idea that the next trillion-dollar platforms would be built by capturing “decision traces” — the exceptions, overrides, precedents, and cross-system context that currently live in people’s heads and Slack threads. They call this a “context graph.” Within a month, Dharmesh Shah of HubSpot described it as a system of record for decisions rather than data. Aaron Levie of Box wrote that when everyone has access to the same models, the differentiator becomes organizational knowledge. Arvind Jain of Glean said the concept finally had a name.

Tomasz Tunguz at Theory Ventures calls it the “context database”: a new system of record for AI agents that combines operational and analytical context. Google’s Agent Development Kit (ADK) treats context as a first-class system with its own architecture and lifecycle. Microsoft’s Fabric IQ positions its “semantic foundation” as the ontology that gives agents a shared understanding of the business. Gartner elevated the semantic layer to “essential infrastructure” in its 2025 Hype Cycle for Analytics and Business Intelligence. Cognizant calls it “context fabric”: the connective tissue between data and decision-making.

The terminology varies. The convergence is unmistakable. The missing layer for agentic AI is not more data access, better pipes, or larger models. It’s context — the semantic understanding that tells an agent what data means, how it should be transformed, and what rules govern its use.

Some of us have been calling this the “Virtual SOR.” It’s not a database. It’s closer to a meta-database: the cross-system understanding that agents need in order to operate.

The idea is that if you own the Virtual SOR, you own the context. And if you own the context, you own the agentic economy for that domain.

Yes, this sounds like the pitch for every middleware company that’s launched and failed in the last twenty years. “We’ll sit between your systems and make everything work.” The difference is that the previous generation optimized for pipes — how do I get data from A to B? The context layer optimizes for the fluid — what does this data actually mean, and how does its meaning change when it crosses from one system, jurisdiction, or regulatory context to another?

Pipes don’t care what flows through them, but the fluid does.

The Jevons Paradox of SORs

My view is that in the agentic era, SORs don’t go away. They proliferate.

This is Jevons’ paradox applied to enterprise software. When agents make it trivially easy to interact with any system, the cost of adding another SOR drops to near zero. Companies will use more systems, not fewer. But the strategic value of any individual SOR drops proportionally, because the orchestration logic — the thing that made each SOR defensible — now lives in the context layer above.

For founders, the dilemma is this: If you’re building at the application layer, you’re competing in a category where defensibility is shrinking. If you’re building at the context layer — accumulating the decision traces and domain knowledge that agents need — the economics look more like data infrastructure than SaaS: deeper moats, longer compounding cycles, but a completely different playbook, financing model, and timeline to value. Most of the startup advice, board expectations, and benchmarking frameworks in our industry were built for SaaS economics. They don’t transfer cleanly.

That’s an uncomfortable conversation most founders haven’t had with their investors yet. (I just started 🙂)

What Context Databases Actually Require

It’s easy to say “the value is in the context layer.” Building one is a different matter — and intellectual honesty demands acknowledging that nobody has fully built this yet.

The reason SORs were defensible was decades of encoded domain knowledge. The entity relationships in Workday reflect thirty years of learning about how HR operations actually work. The schema in SAP reflects half a century of manufacturing, supply chain, and financial process knowledge.

A context database has to capture the equivalent knowledge, but in a fundamentally different form. Instead of hard-coding entity relationships into a fixed schema, it has to learn the relationships dynamically — across systems, across jurisdictions, across time. It has to understand not just what data exists, but what it means, how it was derived, and what rules govern its transformation.

Foundation Capital’s framework identifies the core asset as “decision traces” — the accumulated record of how rules were applied, where exceptions were granted, and why actions were allowed to happen. This is precisely what traditional SORs never stored. The SOR recorded the outcome. The decision trace records the reasoning.
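The difference between recording an outcome and recording the reasoning behind it can be sketched as two data structures. This is a hypothetical shape for illustration, not Foundation Capital’s schema; field names such as `rule_applied` and `precedent_ids`, and the `precedents_for` helper, are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """What a traditional SOR keeps: the resulting state."""
    entity_id: str
    field_name: str
    value: str


@dataclass(frozen=True)
class DecisionTrace:
    """Hypothetical decision-trace record: the outcome plus the reasoning behind it."""
    outcome: Outcome
    rule_applied: str
    exception_granted: bool
    justification: str
    approved_by: str
    precedent_ids: tuple[str, ...] = ()


def precedents_for(traces: list[DecisionTrace], rule: str) -> list[DecisionTrace]:
    """Find earlier exceptions to a rule: the precedents an agent would need to consult."""
    return [t for t in traces if t.rule_applied == rule and t.exception_granted]


traces = [
    DecisionTrace(Outcome("E-001", "base_salary", "72000"), "salary_band_max", True,
                  "Retention risk; approved above band", "hr_director"),
    DecisionTrace(Outcome("E-002", "base_salary", "65000"), "salary_band_max", False,
                  "Within band", "system"),
]
print([t.outcome.entity_id for t in precedents_for(traces, "salary_band_max")])
```

An SOR would hold only the two `Outcome` rows; the traces add why the first salary sits above its band and who approved it, which is exactly what an agent facing the next similar request would need.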

Personally, I think that for a context database to be defensible, it needs four properties.

First, it must be lossless: preserving the full semantic meaning of data from any source system without compressing it into a lowest-common-denominator schema.

Second, it must be learning: improving its understanding of data relationships with every interaction, correction, and exception.

Third, it must be jurisdiction-aware: understanding that the same data field means different things in different regulatory contexts.

Fourth, it must be agent-consumable: exposing its knowledge through structured tool definitions that any AI agent can invoke, with full audit trails (obviously).
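A toy sketch of how the four properties might show up in code, under heavy simplifying assumptions: the raw source value is always kept (lossless), meaning is looked up per jurisdiction (jurisdiction-aware), and answers come back as structured records (agent-consumable). “Learning” is reduced here to keeping every human correction as a record, which is the raw material for learning rather than learning itself. `ContextMap`, the system name `hris_a`, and the concept labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceValue:
    """Lossless: the value exactly as the source system emitted it, plus its origin."""
    system: str
    field_name: str
    raw: str


class ContextMap:
    """Hypothetical mapping from (system, field, jurisdiction) to a canonical concept."""

    def __init__(self) -> None:
        self._concepts: dict[tuple[str, str, str], str] = {}
        # Stand-in for learning: corrections are recorded, never silently overwritten.
        self.corrections: list[dict] = []

    def define(self, system: str, field_name: str, jurisdiction: str, concept: str) -> None:
        self._concepts[(system, field_name, jurisdiction)] = concept

    def correct(self, system: str, field_name: str, jurisdiction: str,
                concept: str, reason: str) -> None:
        key = (system, field_name, jurisdiction)
        self.corrections.append(
            {"key": key, "old": self._concepts.get(key), "new": concept, "reason": reason}
        )
        self._concepts[key] = concept

    def interpret(self, value: SourceValue, jurisdiction: str) -> dict:
        """Agent-consumable answer: concept, untouched raw value, and where it came from."""
        key = (value.system, value.field_name, jurisdiction)
        return {
            "concept": self._concepts.get(key),  # None means "unknown", never a guess
            "raw": value.raw,
            "source": f"{value.system}.{value.field_name}",
            "jurisdiction": jurisdiction,
        }


ctx = ContextMap()
# Same source field, different meaning per jurisdiction (illustrative mappings only).
ctx.define("hris_a", "termination_date", "US-CA", "last_day_worked")
ctx.define("hris_a", "termination_date", "FR", "end_of_notice_period")
value = SourceValue("hris_a", "termination_date", "2026-03-31")
print(ctx.interpret(value, "US-CA")["concept"])
print(ctx.interpret(value, "FR")["concept"])
```

Returning `None` for an unmapped jurisdiction, instead of falling back to some default meaning, is the small-scale version of avoiding the data lake trap: the layer says it doesn’t know rather than giving a confident-sounding answer.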

We should be clear-eyed about the difficulty here. The most obvious failure mode is the data lake trap: capture everything, learn nothing. A decade of enterprise data lake projects proved that preserving context without structured learning on top just creates a swamp. A context database that degrades into a noisy lake of decision traces is worse than not having one — it gives agents confident-sounding answers drawn from unstructured noise. (looking at you, Grok)

All four properties must exist simultaneously for a context database to be defensible, and they must be trained on real operational data. In regulated industries, this could take the better part of a decade to fully materialize. The question for founders isn’t whether this happens overnight. It’s whether you’re accumulating the raw materials — the decision traces, the cross-system mappings, the exception patterns — that will constitute the context database when the infrastructure catches up. The companies that start accumulating now will have compounding advantages that are impossible to replicate from a standing start. The ones that wait for the architecture to be “ready” will find that the data moat has already been built by someone else.

The Trust Gap

There’s a deeper problem that the “headless database” framing raises, and it’s one that anyone who’s actually implemented enterprise systems will recognize immediately: humans aren’t leaving the loop.

Agents don’t need UIs. But the humans who are accountable for agent actions absolutely do. (Because AIs cannot be held accountable, someone has to be.)

The EU Pay Transparency Directive doesn’t require that AI agents can process pay equity data — it requires that auditors can verify how pay equity conclusions were reached, across jurisdictions, with full provenance. If the SOR is demoted to a headless persistence layer and the orchestration logic moves to a context layer, that context layer inherits the entire auditability burden. It needs to show every decision trace, every transformation rule, every exception — and it needs to show them in a way that a compliance officer in Frankfurt can understand and sign off on.
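One established technique for tamper-evident audit trails is a hash chain: each entry’s hash covers the previous entry’s hash, so any later edit to the history breaks verification. The article does not prescribe this; it is shown only as a sketch of what “full provenance” could rest on. The payload fields (`step`, `rule`, `actor`) and the helper names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous hash together with a canonical JSON encoding of the payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


def append_entry(log: list[dict], payload: dict) -> None:
    """Append an entry whose hash chains to the previous one."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev_hash": prev_hash, "hash": _digest(prev_hash, payload)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited, reordered, or removed entry makes this fail."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"step": "read", "source": "hris_a.base_salary", "actor": "agent-7"})
append_entry(log, {"step": "transform", "rule": "convert_to_eur", "actor": "agent-7"})
append_entry(log, {"step": "sign_off", "actor": "compliance_officer"})
print(verify(log))  # True
```

A hash chain only proves the record wasn’t altered after the fact; it does nothing to make the record readable to a compliance officer, which is the other half of the burden described here.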

This is the trust gap. The context layer can’t just be an API surface for agents. It has to be a better system of record for human oversight than the SOR it’s abstracting. If it isn’t, regulated enterprises won’t adopt it — not because the technology doesn’t work, but because the legal risk is unacceptable. The CISO who signs off on agent-mediated data access needs to know exactly what the agent saw, what it did, and why. The CFO who certifies pay equity compliance needs an audit trail that holds up in court.

The companies that underestimate this — that build beautiful agent-consumable APIs without investing equally in human-readable audit interfaces — will hit a wall in every regulated industry. And regulated industries are where the highest-value enterprise data lives.

The Two-Speed Enterprise

What makes this transition particularly challenging is that it’s happening at two speeds simultaneously — and the gap between them may persist for a long time.

The AI infrastructure layer is moving at startup speed. Hyperscalers plan to spend $660–690 billion on AI infrastructure in 2026, nearly doubling 2025. New agent frameworks, context protocols such as the Model Context Protocol (MCP), and tool architectures are shipping almost daily. Lemkin’s maxim, that the “80% good enough” agent is worth 10x more than the 99% agent that ships eighteen months later, captures the dynamic in categories where speed-to-market matters more than precision: consumer workflows, content generation, sales outreach, internal tooling.

But there are entire domains where 80% accuracy is a liability, not an advantage. I know this from painful experience. In payroll, an 80% accurate agent miscalculates statutory deductions in every country you operate in. The “first mover wins” logic assumes that the cost of imprecision is low and recoverable. In regulated enterprise domains (HCM, finance, healthcare, legal, and the like), the cost of imprecision is lawsuits, regulatory fines, and destroyed trust. Being first matters less than being right.
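The cost of imprecision compounds quickly. As a rough worked example, assume each statutory deduction calculation is correct independently with a fixed probability (a simplification; real errors are correlated). The chance that an entire run is correct then decays geometrically with the number of calculations, while the expected number of errors grows linearly:

```python
def prob_all_correct(accuracy: float, n_calcs: int) -> float:
    """Probability that every one of n independent calculations is correct."""
    return accuracy ** n_calcs


def expected_errors(accuracy: float, n_calcs: int) -> float:
    """Expected number of wrong calculations out of n."""
    return (1.0 - accuracy) * n_calcs


# Ten countries, one deduction calculation each.
print(round(prob_all_correct(0.80, 10), 3))  # 0.107
print(round(prob_all_correct(0.99, 10), 3))  # 0.904
# A 1,000-employee payroll run.
print(round(expected_errors(0.80, 1000)))    # 200
```

Under these assumptions, an 80% agent produces a fully correct ten-country run only about 11% of the time, and roughly 200 wrong deductions per 1,000 employees.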

This creates a paradox at the heart of the agent economy. The domains where agent-mediated orchestration creates the most value (complex, cross-system, regulation-heavy) are precisely the domains where the “ship fast, iterate” playbook is most dangerous. The context layer in these domains doesn’t just need to be fast and agent-consumable — it needs to be provably correct. That requirement dramatically narrows who can build it and how quickly it can be deployed.

The enterprise data layer is moving at enterprise speed. Thirty thousand SAP customers have to migrate from on-premise to cloud. Seven thousand Workday customers need payroll connectivity across fragmented provider landscapes. The EU Pay Transparency Directive must be transposed into national law by June 2026, requiring audit-proof pay equity across systems that were never designed to talk to each other. These migrations take years. The compliance requirements compound. The security reviews alone for agent-mediated data access will occupy enterprise IT teams well into the next decade.

This matters because the conventional startup playbook — move fast, capture the market, iterate — runs headfirst into enterprise procurement reality. A company can build an agent framework in weeks. Getting that agent production access to a Fortune 500’s Workday instance will take twelve to eighteen months of security review, data governance approval, and legal negotiation. The infrastructure moves fast. The adoption moves slow. And the data you need to build a defensible context layer lives behind the slow-moving gates.

The companies that will capture disproportionate value are the ones that bridge this gap — not by waiting for enterprises to be “ready for agents,” but by solving the cross-system data problems that enterprises already have and accumulating context as a byproduct. The context database doesn’t arrive as a greenfield deployment. It emerges from solving real integration pain, one decision trace at a time, inside the existing enterprise reality.

What This Means for Founders

I’m writing this mostly for myself, to help me understand (and therefore sleep better). But if you’re building an enterprise software company right now, the strategic question has shifted. It’s no longer “how do I build a better application?” It’s “how do I accumulate context that agents can’t generate on their own?”

Models are commoditizing. Agents are commoditizing. The application layer is being compressed. What’s not commoditizing is domain-specific context — the accumulated understanding of what data means in a specific domain, how it should be transformed, and what rules govern its use. That understanding has to be earned through real operational data, real exception handling, and real cross-system integration experience. There are no shortcuts. (I’ve looked)

If your product is primarily a UI on top of data that agents could access directly, you are on the wrong side of this transition (this should already be clear by now). If your moat is “we have a nice interface and good workflows,” agents are about to route around you. If your pricing model is per-seat and your value proposition assumes human users navigating your application, you should be worried.

The companies that win will be the ones building what Foundation Capital describes as the “new systems of record that emerge from orchestration layers” — systems that started by solving cross-system data problems and ended up accumulating the context that agents need to operate reliably.

The SOR isn’t dead. The data still needs to live somewhere. But the moat is migrating. It’s moving from where data sleeps to where agents work. From entity relationships locked inside vendor schemas to decision traces that compound across every workflow, every system, and every correction. The migration will be slower than the AI hype cycle suggests but faster than most enterprise incumbents are prepared for.

I don’t have all the answers here (I’m only hoping to be directionally correct). But the question every enterprise founder needs to sit with is: are you building applications, or are you building context?

The repricing suggests the market is starting to figure out which one it values.

References

The SaaSpocalypse & Market Repricing

- Jason Lemkin, “SaaS Isn’t Dead. But the Way You Used to Win in B2B? That’s Gone.” SaaStr, February 22, 2026. https://www.saastr.com/saas-isnt-dead-but-the-way-you-used-to-win-in-b2b-thats-gone/

- “The 2026 SaaS Apocalypse: Why Wall Street Is Dumping Software Stocks.” AI 2 Work, February 14, 2026. https://ai2.work/technology/the-2026-saas-apocalypse-why-wall-street-is-dumping-software-stocks/

- Dave Friedman, “The SaaSpocalypse Is a Credit Event, Not a Tech Story.” Substack, February 2026. https://davefriedman.substack.com/p/the-saaspocalypse-is-a-credit-event

- “$150/Seat × 0 Humans = ? | The End of Per-Seat SaaS?” Substack, February 2026. https://sderosiaux.substack.com/p/150seat-0-humans-the-end-of-per-seat

Context Graphs & Decision Traces

- Jaya Gupta & Ashu Garg, “AI’s Trillion-Dollar Opportunity: Context Graphs.” Foundation Capital, December 22, 2025. https://foundationcapital.com/context-graphs-ais-trillion-dollar-opportunity/

- Ashu Garg, “Context Graphs, One Month In.” Foundation Capital, January 31, 2026. https://foundationcapital.com/context-graphs-one-month-in/

- Ashu Garg, “Why Context Graphs Are the Missing Layer for AI.” Foundation Capital podcast, January 16, 2026. https://foundationcapital.com/why-are-context-graphs-are-the-missing-layer-for-ai/

Context Databases

- Tomasz Tunguz, “The Two Context Databases Powering Enterprise AI.” Theory Ventures blog, December 10, 2025. https://tomtunguz.com/operational-analytical-context-databases/

- Tomasz Tunguz, “The Future of AI Data Architecture.” Theory Ventures blog, September 28, 2025. https://tomtunguz.com/future-ai-data-architecture-enterprise-stack/

- datascalehr, “Global HCM context layer.” https://www.datascalehr.com

Semantic Layers & Enterprise AI Infrastructure

- Microsoft, “Fabric IQ: The Semantic Foundation for Enterprise AI.” Microsoft Fabric Blog, November 2025. https://blog.fabric.microsoft.com/en-us/blog/introducing-fabric-iq-the-semantic-foundation-for-enterprise-ai

- Microsoft, “From Data Platform to Intelligence Platform: Introducing Microsoft Fabric IQ.” Microsoft Fabric Blog, November 2025. https://blog.fabric.microsoft.com/en-us/blog/from-data-platform-to-intelligence-platform-introducing-microsoft-fabric-iq

- Gartner, 2025 Hype Cycle for Analytics and Business Intelligence — semantic layer elevated to essential infrastructure. (Paywalled; summary via AtScale: https://www.atscale.com/blog/semantic-layer-2025-in-review/)

Lemkin on AI Agents & SaaS Operations

- Jason Lemkin on Lenny’s Podcast, “We Replaced Our Sales Team with 20 AI Agents — Here’s What Happened Next.” January 2026. https://www.lennysnewsletter.com/p/we-replaced-our-sales-team-with-20-ai-agents